Ever wonder what your pets are up to when you’re not looking? Dr. Michael Rissi, physicist and Director of Innovation, has taken hutch monitoring to the next level by developing a multi-label visual recognition system. As a personal DECTRIS CLOUD subscriber who uses the platform for hobby science projects in his free time, he put DECTRIS CLOUD to work tracking three specific bunnies: Bruenli, Flocki, and Flipsy.

The Technical “Carrot”

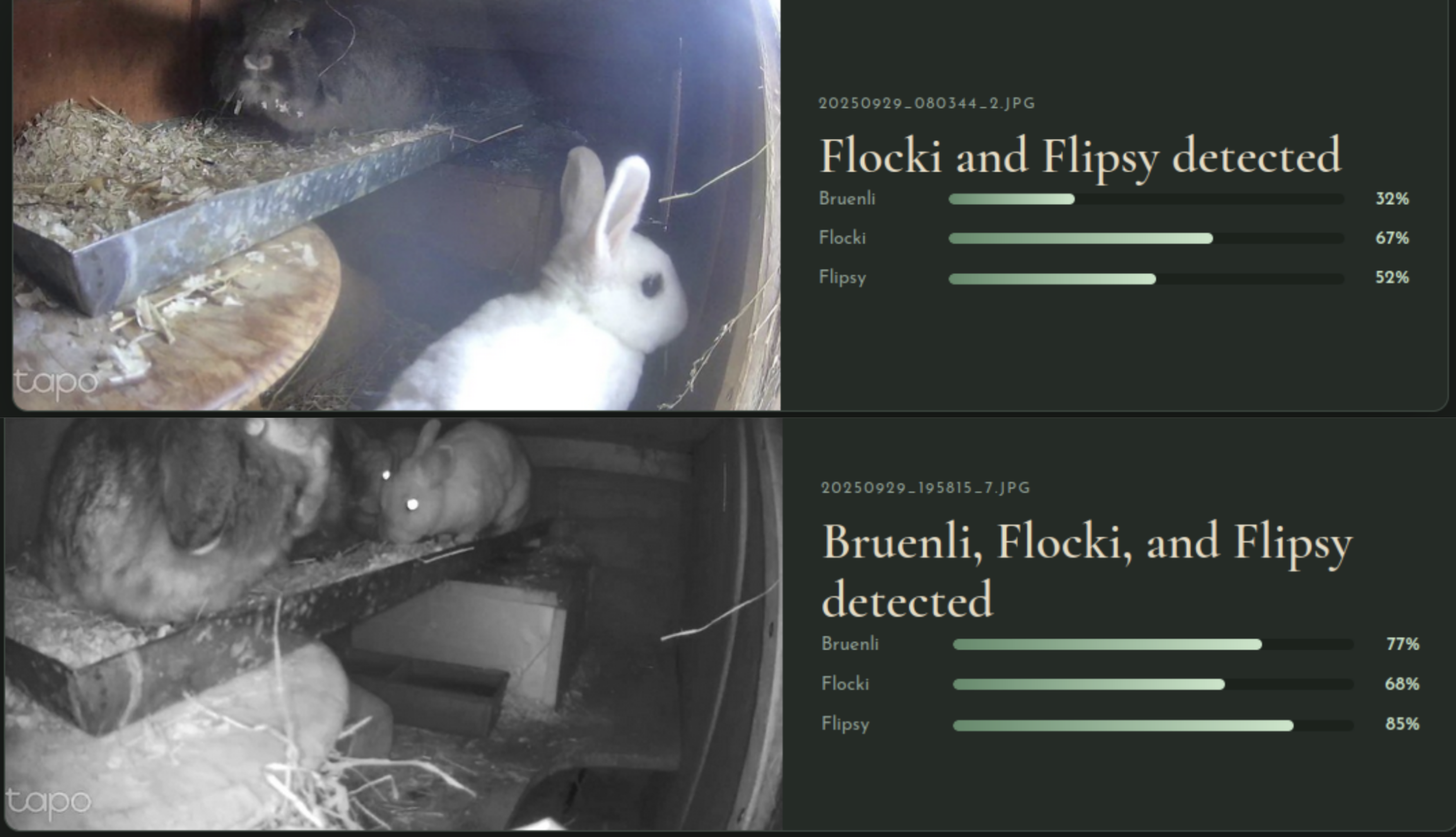

Built on a dataset of over 11,000 unique frames captured by a Tapo enclosure camera, the project treats bunny identification as a multi-label classification problem. Rather than merely detecting that a bunny is present, the model decides for each individual whether it appears in the frame, so it can recognize several bunnies at the same time.
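A multi-label classifier is read differently from an ordinary multi-class one: each bunny gets its own independent probability instead of competing with the others in a softmax. The sketch below assumes a per-bunny sigmoid and a 0.5 decision threshold, a common default; the post does not state the threshold actually used, and the label order and function names are hypothetical.

```python
import math

# Hypothetical order of the three output units of the classifier head.
BUNNIES = ("Bruenli", "Flocki", "Flipsy")


def sigmoid(x: float) -> float:
    """Numerically stable logistic function: maps a raw logit to (0, 1)."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def present_bunnies(logits, threshold=0.5):
    """Return {name: probability} for every bunny whose probability clears the threshold.

    Each logit is squashed independently (sigmoid, not softmax), so the
    probabilities do not compete and any subset of bunnies can be present.
    """
    probs = {name: sigmoid(z) for name, z in zip(BUNNIES, logits)}
    return {name: p for name, p in probs.items() if p >= threshold}


if __name__ == "__main__":
    # Invented logits for a single frame: two bunnies visible, one absent.
    print(present_bunnies([2.1, -1.3, 0.8]))
```

Because the outputs are independent, an empty result (no bunny in view) and a result containing all three are both valid predictions, which a single softmax over three classes could not express.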

- Architecture: The system uses transfer learning with an ImageNet-pretrained EfficientNet-B0 backbone.

- Efficiency: The backbone remained frozen while only the final 3-class linear layer, consisting of 3,843 parameters, was trained.

- Compute: Training and inference were accelerated on DECTRIS CLOUD using 4x NVIDIA A10G GPUs (our supersonic instance) for a total training time of 2 hours.
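The figure of 3,843 trainable parameters follows directly from the head's shape, assuming the standard torchvision EfficientNet-B0, whose pooled feature vector has 1,280 channels: a linear layer from 1,280 features to 3 outputs has 1,280 × 3 weights plus 3 biases. The snippet below checks that arithmetic; it is a back-of-the-envelope sketch, not the project's training code.

```python
def linear_params(in_features: int, out_features: int, bias: bool = True) -> int:
    """Number of trainable parameters in a fully connected layer."""
    weights = in_features * out_features
    biases = out_features if bias else 0
    return weights + biases


# Assumption: EfficientNet-B0 produces a 1,280-dimensional pooled feature vector,
# and the frozen backbone contributes no trainable parameters.
EFFICIENTNET_B0_FEATURES = 1280
NUM_BUNNIES = 3

head_params = linear_params(EFFICIENTNET_B0_FEATURES, NUM_BUNNIES)
print(head_params)  # 3843
```

In PyTorch, this setup typically means setting `requires_grad = False` on the backbone's parameters and replacing the final `nn.Linear` in torchvision's `efficientnet_b0` classifier with `nn.Linear(1280, 3)`, so that only the new layer receives gradient updates.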

Image: Examples of the system in action, showing how it identifies each bunny and provides a confidence score for each prediction. The model spots individuals such as Flipsy and Flocki even when they are moving or in different parts of the hutch.

Behind the Scenes: Model Training

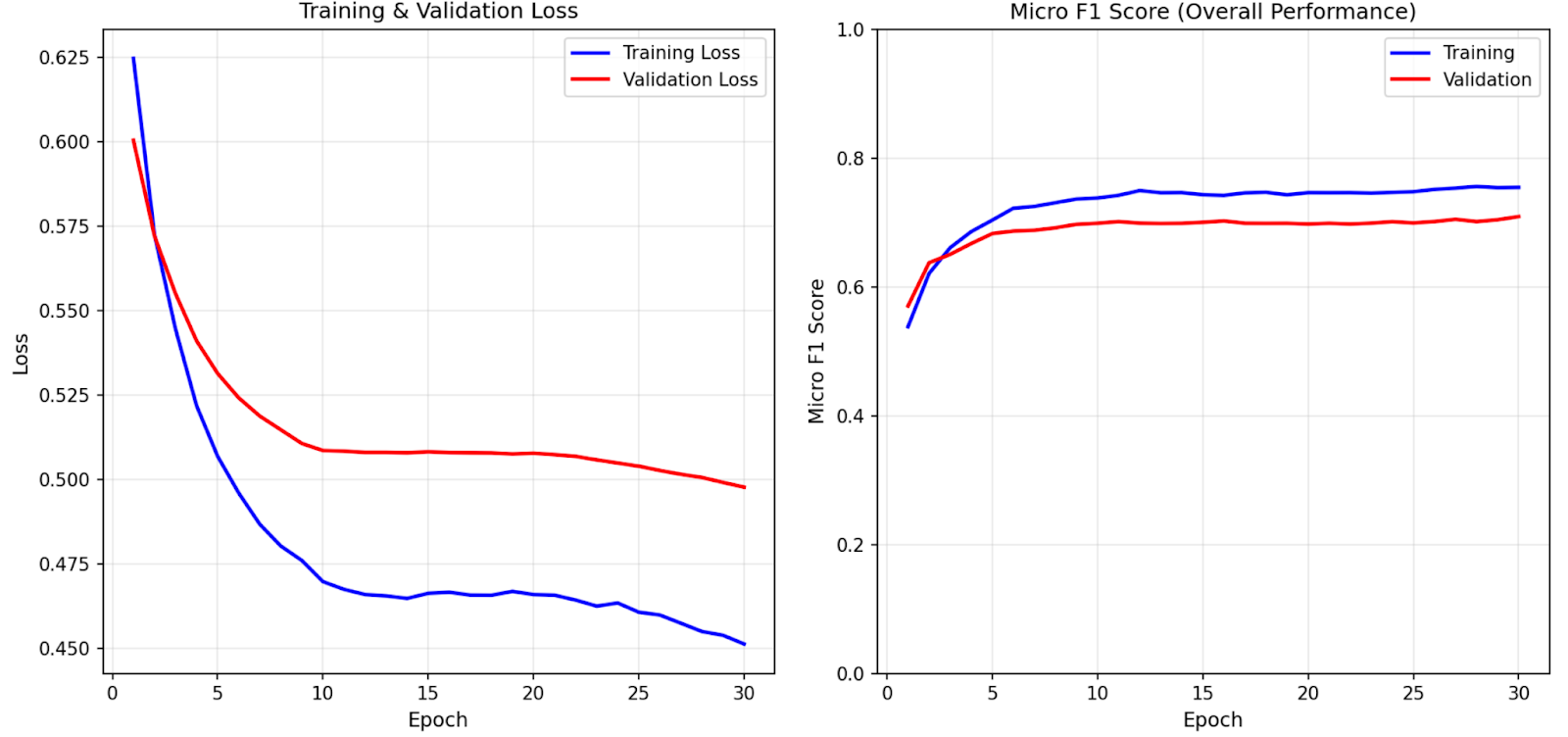

To ensure the classifier was reliable, the model was trained for 30 epochs using the AdamW optimizer.
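AdamW is Adam with the weight decay decoupled from the gradient-based step: the weights are shrunk directly instead of having an L2 term folded into the gradient. The sketch below spells out one update in plain Python. The hyperparameters are PyTorch's `torch.optim.AdamW` defaults, used here only as placeholders because the post does not report the values used for training.

```python
import math


def adamw_step(theta, grad, m, v, t, lr=1e-3, betas=(0.9, 0.999), eps=1e-8, weight_decay=1e-2):
    """Apply one AdamW update to a list of scalar parameters.

    `m` and `v` are the running first and second moments, and `t` is the
    1-based step count. Returns the updated (theta, m, v) as new lists.
    """
    b1, b2 = betas
    new_theta, new_m, new_v = [], [], []
    for p, g, mi, vi in zip(theta, grad, m, v):
        mi = b1 * mi + (1 - b1) * g        # running mean of gradients
        vi = b2 * vi + (1 - b2) * g * g    # running mean of squared gradients
        m_hat = mi / (1 - b1 ** t)         # bias correction for the zero initialisation
        v_hat = vi / (1 - b2 ** t)
        p = p * (1 - lr * weight_decay)    # decoupled weight decay on the weight itself
        p = p - lr * m_hat / (math.sqrt(v_hat) + eps)  # Adam step
        new_theta.append(p)
        new_m.append(mi)
        new_v.append(vi)
    return new_theta, new_m, new_v


if __name__ == "__main__":
    theta, m, v = adamw_step([1.0], [0.5], [0.0], [0.0], t=1)
    print(theta)
```

On the first step the bias-corrected ratio `m_hat / sqrt(v_hat)` is close to ±1, so each weight moves by roughly `lr` regardless of the gradient's magnitude, plus a small shrinkage from the decay term.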

Figure: These plots illustrate the model's progress over 30 epochs. The loss curve shows the error decreasing, while the F1 score shows the steady improvement in classification performance as the system learned to distinguish between the three bunnies. The training loss remaining below the validation loss indicates that the model is slightly overfitted.
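Early stopping, one of the remedies for overfitting mentioned under Real-World Results, watches exactly this validation curve and keeps the weights from the best epoch. A minimal sketch, assuming the validation loss is recorded once per epoch; the `patience` and `min_delta` values are illustrative, and the losses in the usage example are invented rather than read from the plots.

```python
def early_stopping_epoch(val_losses, patience=3, min_delta=0.0):
    """Return the 0-based epoch whose weights early stopping would keep.

    Training stops once the validation loss has failed to improve by more
    than `min_delta` for `patience` consecutive epochs; the best epoch seen
    up to that point is returned.
    """
    best_epoch, best_loss = 0, float("inf")
    epochs_without_improvement = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss - min_delta:
            best_epoch, best_loss = epoch, loss
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                break  # stop training; later epochs are never evaluated
    return best_epoch


if __name__ == "__main__":
    # Invented validation losses: improvement stalls after epoch 2.
    print(early_stopping_epoch([0.9, 0.7, 0.6, 0.62, 0.65, 0.7, 0.5]))  # 2
```

The late dip to 0.5 in the example is never reached, which illustrates the trade-off: a small `patience` saves compute but can stop before a later improvement.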

Real-World Results

The system reached a micro-averaged F1 score of 0.705 on a held-out test set. This indicates that the model can distinguish individual bunnies within an unstructured hutch environment, with room for improvement. To make the predictions more robust and reduce the slight overfitting seen during training, future iterations could add regularization such as dropout, use early stopping, or apply richer image augmentation (e.g., random rotations and color jittering) to account for the visual complexity of the hutch. Nevertheless, for this application the current robustness is sufficient.
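Micro-averaging pools true positives, false positives, and false negatives across all three bunnies before computing a single F1 score, so bunnies that appear more often carry more weight. A small sketch of the calculation; the toy labels are invented and unrelated to the reported 0.705.

```python
def micro_f1(y_true, y_pred):
    """Micro-averaged F1 for multi-label predictions.

    `y_true` and `y_pred` are lists of 0/1 rows: one row per frame and one
    column per bunny. Counts are pooled over all labels before computing F1.
    """
    tp = fp = fn = 0
    for truth_row, pred_row in zip(y_true, y_pred):
        for t, p in zip(truth_row, pred_row):
            if t and p:
                tp += 1
            elif p and not t:
                fp += 1
            elif t and not p:
                fn += 1
    denom = 2 * tp + fp + fn
    # F1 = 2PR / (P + R) simplifies to 2TP / (2TP + FP + FN).
    return 2 * tp / denom if denom else 0.0


if __name__ == "__main__":
    truth = [[1, 0, 1], [0, 1, 0], [1, 1, 0]]
    preds = [[1, 0, 0], [0, 1, 1], [1, 0, 0]]
    print(micro_f1(truth, preds))  # TP=3, FP=1, FN=2 -> 6/9
```

A macro average would instead compute F1 per bunny and take the mean, giving each bunny equal weight however often it appears in the footage.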

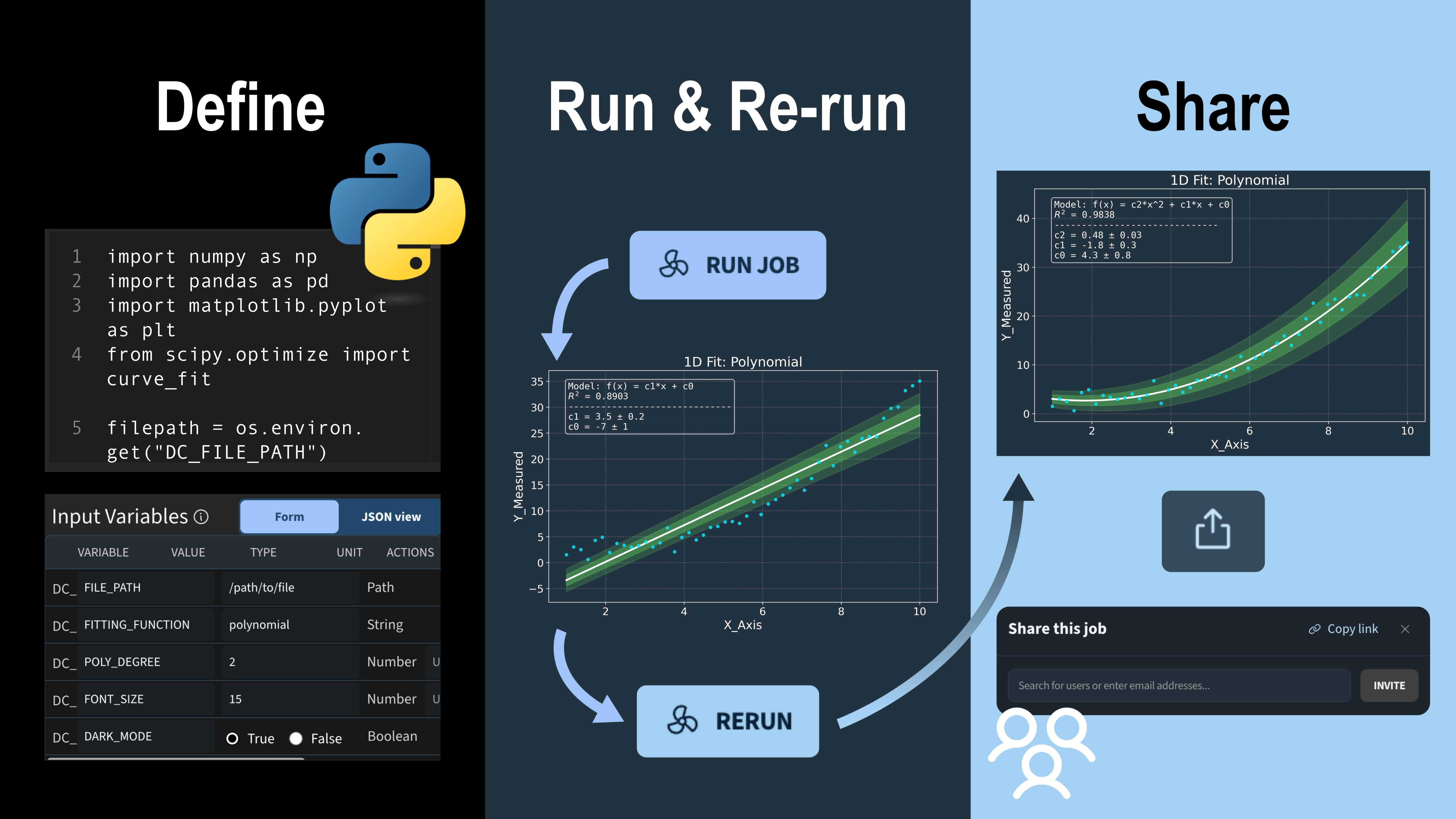

Beyond simple detection, the project proposes an automated pipeline using the DECTRIS CLOUD Jobs API to log behavioral profiles and perform hutch return checks to ensure every animal is safely indoors at night. Whether used for monitoring pets or analyzing complex scientific data, this workflow shows how easily deep learning models can be deployed on our infrastructure. As our colleague noted, it is still an open research question whether the bunnies actually enjoy being under constant surveillance.
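At its core, a return check is a simple rule over per-frame predictions. The sketch below is hypothetical: it assumes the classifier's outputs are stored as timestamped per-bunny probabilities and that the camera views the indoor part of the hutch, and it neither uses nor models the DECTRIS CLOUD Jobs API. The 30-minute window and 0.5 threshold are illustrative choices.

```python
from datetime import datetime, timedelta

BUNNIES = ("Bruenli", "Flocki", "Flipsy")


def missing_bunnies(detections, now, window=timedelta(minutes=30), threshold=0.5):
    """Return the bunnies not confidently seen during the last `window` before `now`.

    `detections` is a hypothetical list of (timestamp, {name: probability})
    tuples, one per analysed frame.
    """
    cutoff = now - window
    seen = set()
    for timestamp, probs in detections:
        if cutoff <= timestamp <= now:
            seen.update(name for name, p in probs.items() if p >= threshold)
    return [name for name in BUNNIES if name not in seen]


if __name__ == "__main__":
    now = datetime(2024, 1, 1, 22, 0)
    frames = [
        # Outside the 30-minute window, so it is ignored.
        (now - timedelta(minutes=50), {"Bruenli": 0.9, "Flocki": 0.8, "Flipsy": 0.9}),
        (now - timedelta(minutes=10), {"Bruenli": 0.95, "Flocki": 0.3, "Flipsy": 0.7}),
    ]
    print(missing_bunnies(frames, now))  # ['Flocki']
```

A scheduled job could run such a check shortly after dusk and raise an alert whenever the returned list is non-empty.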

Key References

- EfficientNet: Tan, M. & Le, Q.V. (2019). EfficientNet: Rethinking Model Scaling for Convolutional Neural Networks. ICML 2019.

- PyTorch: https://pytorch.org/

- TorchVision EfficientNet: https://pytorch.org/vision/stable/models.html
